The pages above walk through the most popular AI coding tools step-by-step. For other tools that accept a custom Base URL + API Key, use the values below.

Configuration Reference

MiniMax exposes two protocols. Pick whichever your tool supports — most modern tools support at least one.

OpenAI-Compatible Protocol

| Field | Value |
|---|---|
| Provider | OpenAI Compatible (sometimes called Custom or OpenAI-format) |
| Base URL | https://api.minimax.io/v1 |
| API Key | Get Token Plan Key |
| Model ID | MiniMax-M2.7 or MiniMax-M2.7-highspeed |
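For tools or scripts that let you build the HTTP request yourself, the values in the table above map onto a request roughly as follows. This is a minimal sketch using only the Python standard library. The `/chat/completions` path and the response shape are assumptions based on the usual OpenAI format, not something this page states, and `build_chat_request` and `send` are illustrative names.

```python
import json
import urllib.request

BASE_URL = "https://api.minimax.io/v1"  # Base URL from the table above


def build_chat_request(api_key, prompt, model="MiniMax-M2.7"):
    """Build (but do not send) an OpenAI-format chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",  # assumed path (OpenAI convention)
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's text.

    Needs network access and a valid key; assumes an OpenAI-format response.
    """
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Inspect the request offline; replace the placeholder with your Token Plan Key.
request = build_chat_request("sk-cp-...", "Hello")
print(request.get_method(), request.full_url)
```

Calling `send(request)` with a real key performs the actual call.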

Anthropic-Compatible Protocol

| Field | Value |
|---|---|
| Provider | Anthropic Compatible (sometimes called Claude or Custom Anthropic) |
| Base URL | https://api.minimax.io/anthropic |
| API Key | Get Token Plan Key |
| Model ID | MiniMax-M2.7 or MiniMax-M2.7-highspeed |
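The Anthropic-format request differs mainly in its path, its required `max_tokens` field, and a response made of typed content blocks. The sketch below shows those differences with the standard library only. The `/v1/messages` path and the `anthropic-version` value follow Anthropic's public Messages API and are assumed to carry over to this endpoint. The bearer header mirrors the bearer scheme used in the tool setups on this page. `build_messages_request` and `extract_text` are illustrative names.

```python
import json
import urllib.request

BASE_URL = "https://api.minimax.io/anthropic"  # Base URL from the table above


def build_messages_request(api_key, prompt, model="MiniMax-M2.7", max_tokens=1024):
    """Build (but do not send) an Anthropic-format Messages request."""
    payload = {
        "model": model,
        "max_tokens": max_tokens,  # required by the Messages format
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/messages",  # assumed path (Anthropic convention)
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "anthropic-version": "2023-06-01",  # assumed to be accepted here
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_text(response_body):
    """Join the text blocks of a Messages-format response, skipping other block types."""
    return "".join(
        block.get("text", "")
        for block in response_body.get("content", [])
        if block.get("type") == "text"
    )


# Offline illustration with a hand-written response body.
sample = {"content": [{"type": "thinking", "thinking": "..."}, {"type": "text", "text": "Hi"}]}
print(extract_text(sample))
```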

Which Protocol Should I Pick?

| Tool style | Recommended protocol | Typical env vars |
|---|---|---|
| Claude Code-style (TUI / CLI written for Anthropic) | Anthropic-Compatible | ANTHROPIC_BASE_URL + ANTHROPIC_AUTH_TOKEN |
| Cursor / Continue / Aider / OpenAI-format IDE plugins | OpenAI-Compatible | OPENAI_BASE_URL + OPENAI_API_KEY |
| Tools that ask for both | Either works; Anthropic-Compatible is recommended for prompt-cache benefits | |
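As a rough illustration of the table above, the mapping from protocol to environment variables can be written down once and reused to generate shell exports. The helper names (`env_for`, `export_lines`) are hypothetical. The variable names and URLs are the ones listed on this page, and whether a given tool actually reads them depends on the tool.

```python
import shlex

# Protocol -> base URL and the env var pair from the table above.
ENDPOINTS = {
    "anthropic": {
        "base_url": "https://api.minimax.io/anthropic",
        "env": ("ANTHROPIC_BASE_URL", "ANTHROPIC_AUTH_TOKEN"),
    },
    "openai": {
        "base_url": "https://api.minimax.io/v1",
        "env": ("OPENAI_BASE_URL", "OPENAI_API_KEY"),
    },
}


def env_for(protocol, api_key):
    """Return the env vars a tool of the given protocol typically reads."""
    entry = ENDPOINTS[protocol]
    url_var, key_var = entry["env"]
    return {url_var: entry["base_url"], key_var: api_key}


def export_lines(protocol, api_key):
    """Render the env vars as shell `export` lines, quoting values when needed."""
    return [
        f"export {name}={shlex.quote(value)}"
        for name, value in env_for(protocol, api_key).items()
    ]


# Replace the placeholder with your Token Plan Key before using the output.
for line in export_lines("anthropic", "sk-cp-..."):
    print(line)
```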

Cherry Studio

Cherry Studio is an open-source desktop client supporting 50+ LLM providers, with built-in MCP servers and 300+ assistants.

Add MiniMax provider

Open Cherry Studio → click Choose other Providers → search MiniMax, then pick MiniMax.

Enter API Key

Enter your Token Plan Key (the API Host is prefilled), then click Check to verify.

Pick the model

Select MiniMax-M2.7 from the model list.

Claude Desktop

Claude Desktop is Anthropic’s official desktop app for Claude — chat, code, and Desktop Commander tools in one window.

Download the desktop client

Enable Developer mode

Sign in to Claude. From the macOS top menu bar, choose Help → Troubleshooting → Enable Developer mode — a new Developer menu appears.

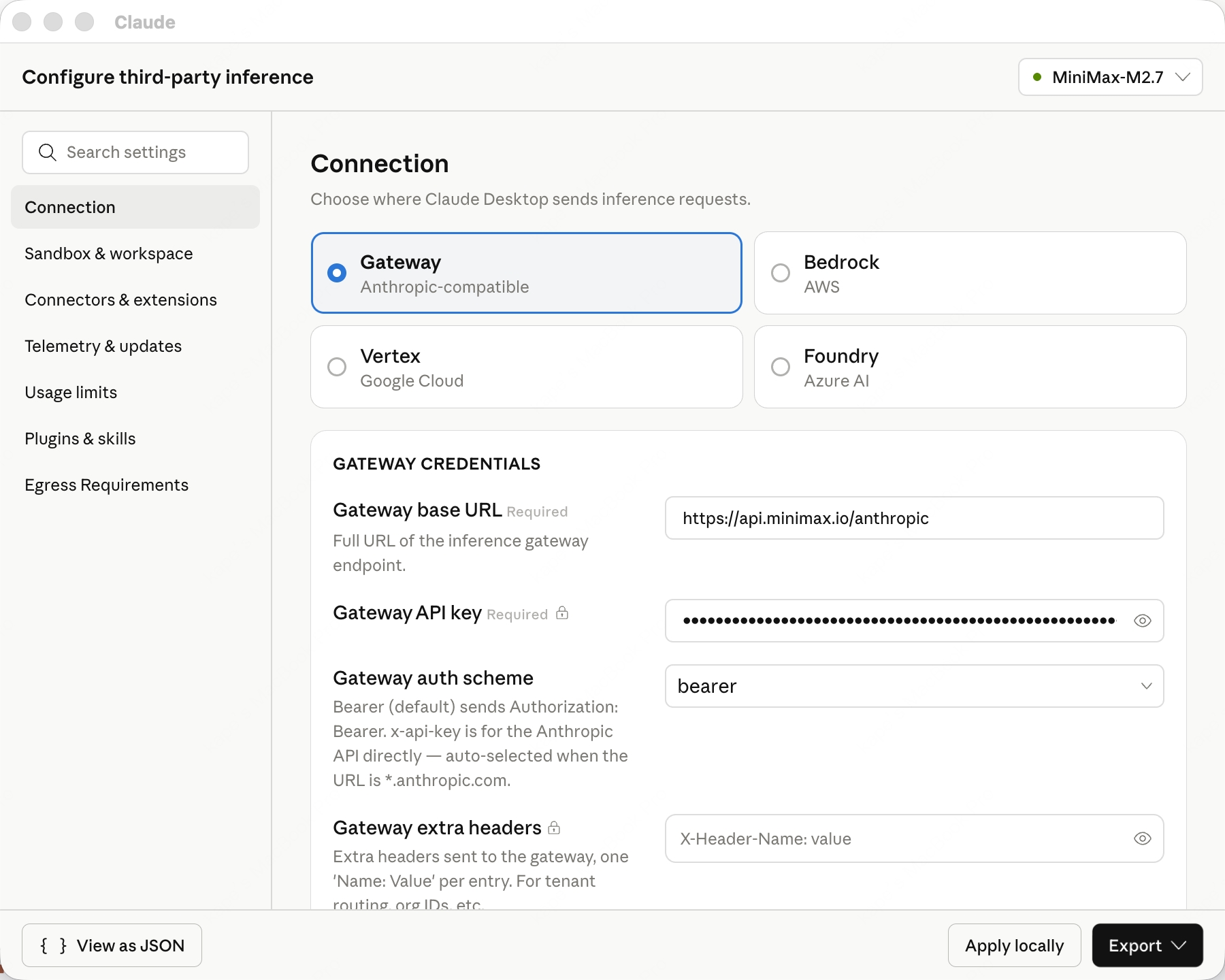

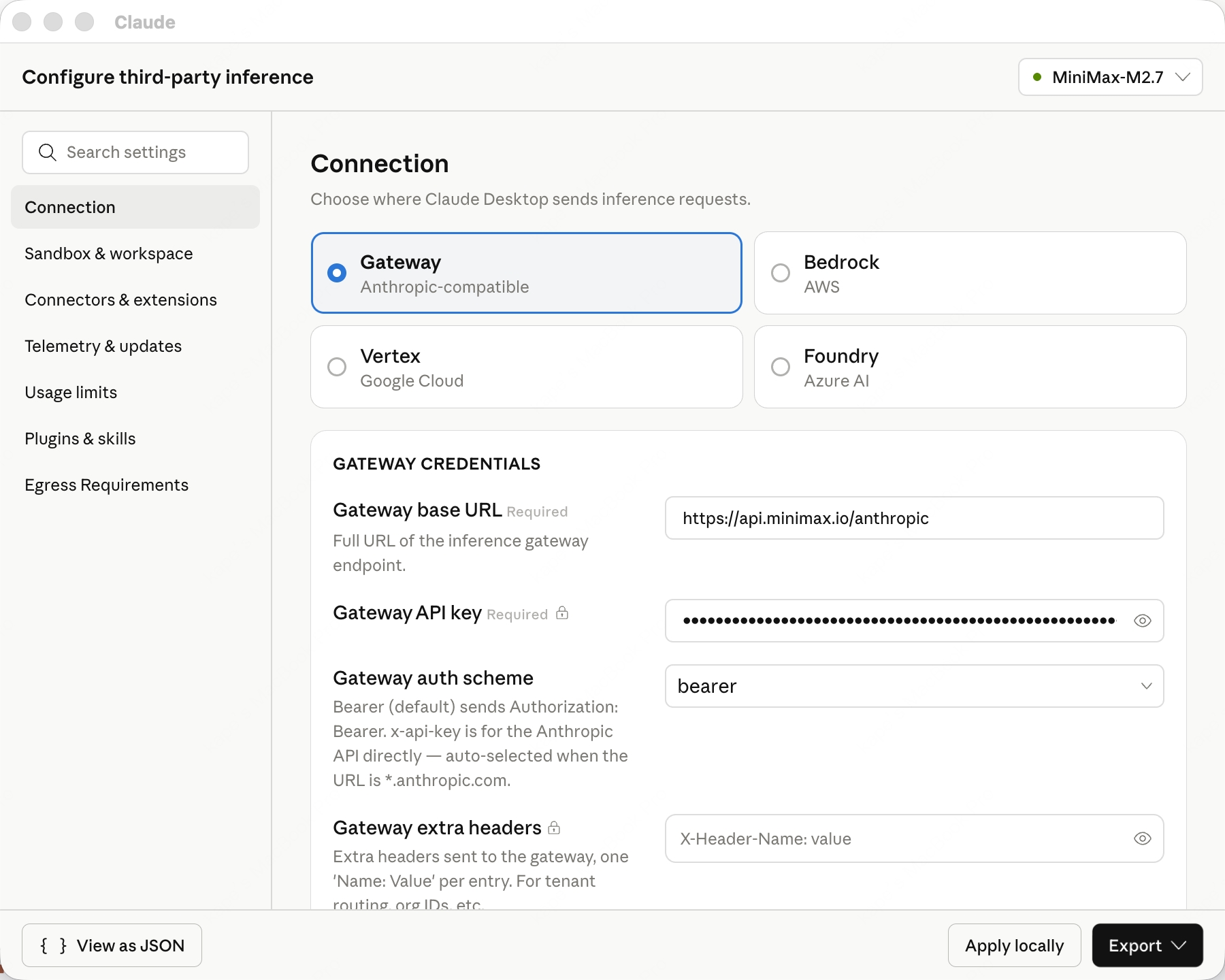

Configure third-party inference

Open Developer → Configure third-party inference → Connection and fill in these four fields:

- inferenceProvider: gateway
- inferenceGatewayBaseUrl: https://api.minimax.io/anthropic
- inferenceGatewayApiKey: your Token Plan Key (prefix sk-cp-…, get one here)
- inferenceGatewayAuthScheme: bearer

Restart and pick model

Click Apply locally → Relaunch now. After restart, pick MiniMax-M2.7 from the model picker in the bottom-right to start chatting.

Xcode

Xcode is Apple’s official IDE for macOS, iOS, iPadOS, watchOS, and visionOS, now with built-in Coding Intelligence.

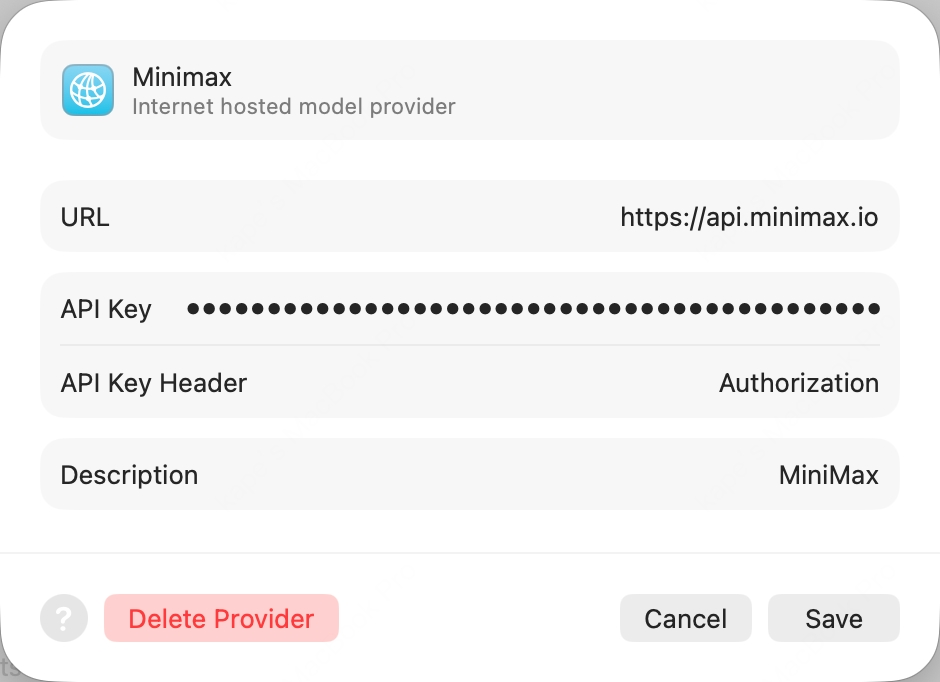

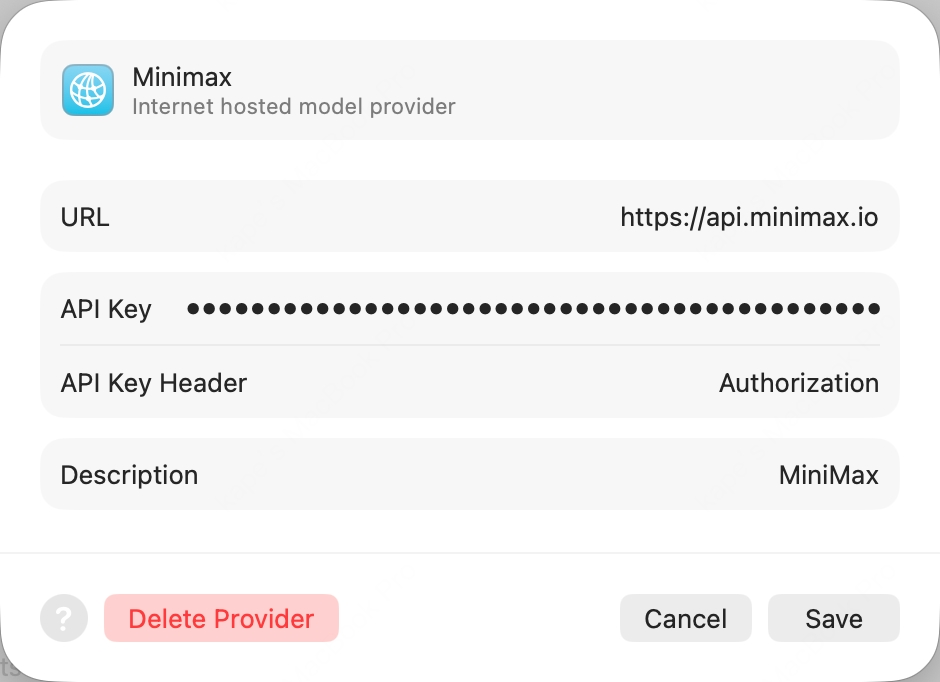

Add a Model Provider

Open Xcode → top menu Xcode → Settings → Intelligence → Add a Model Provider, choose the Internet Hosted tab, and fill in:

- URL: https://api.minimax.io (bare host, no path)
- API Key Header: Authorization (override the default x-api-key)
- API Key: Bearer <your Token Plan Key> (the word Bearer, a single space, then your sk-cp-… key; get one here)
- Description: MiniMax (any value)
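The API Key field is the easiest one to get wrong, because Xcode sends its content verbatim as the header value. A small sketch of how the four fields fit together, assuming you start from the raw key (`xcode_provider_fields` is a hypothetical helper, not part of Xcode):

```python
def xcode_provider_fields(token_plan_key):
    """Return the values to type into Xcode's Internet Hosted provider form."""
    key = token_plan_key.strip()
    if key.lower().startswith("bearer "):
        # Avoid producing "Bearer Bearer sk-cp-..." by accident.
        raise ValueError("pass the raw key; the Bearer prefix is added here")
    return {
        "URL": "https://api.minimax.io",       # bare host, no path
        "API Key Header": "Authorization",     # replaces the default x-api-key
        "API Key": f"Bearer {key}",            # word, single space, key
        "Description": "MiniMax",              # any value works
    }


# Replace the placeholder with your Token Plan Key.
for field, value in xcode_provider_fields("sk-cp-...").items():
    print(f"{field}: {value}")
```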

Enable the model

Click Add. Back in the Intelligence pane, open the new MiniMax provider and enable MiniMax-M2.7.

Start chatting

Open any project, press ⌘+0 to bring up the Coding Assistant, then click the edit icon at the top-left and pick MiniMax-M2.7.

Pi

Pi is an open-source TUI coding agent from earendil-works that talks to any provider through a unified LLM API.

Install Pi

npm install -g @earendil-works/pi-coding-agent

Set env var and launch

Export your Token Plan Key (prefix sk-cp-…) and launch Pi:

export MINIMAX_API_KEY=sk-cp-...

pi --provider minimax --model MiniMax-M2.7

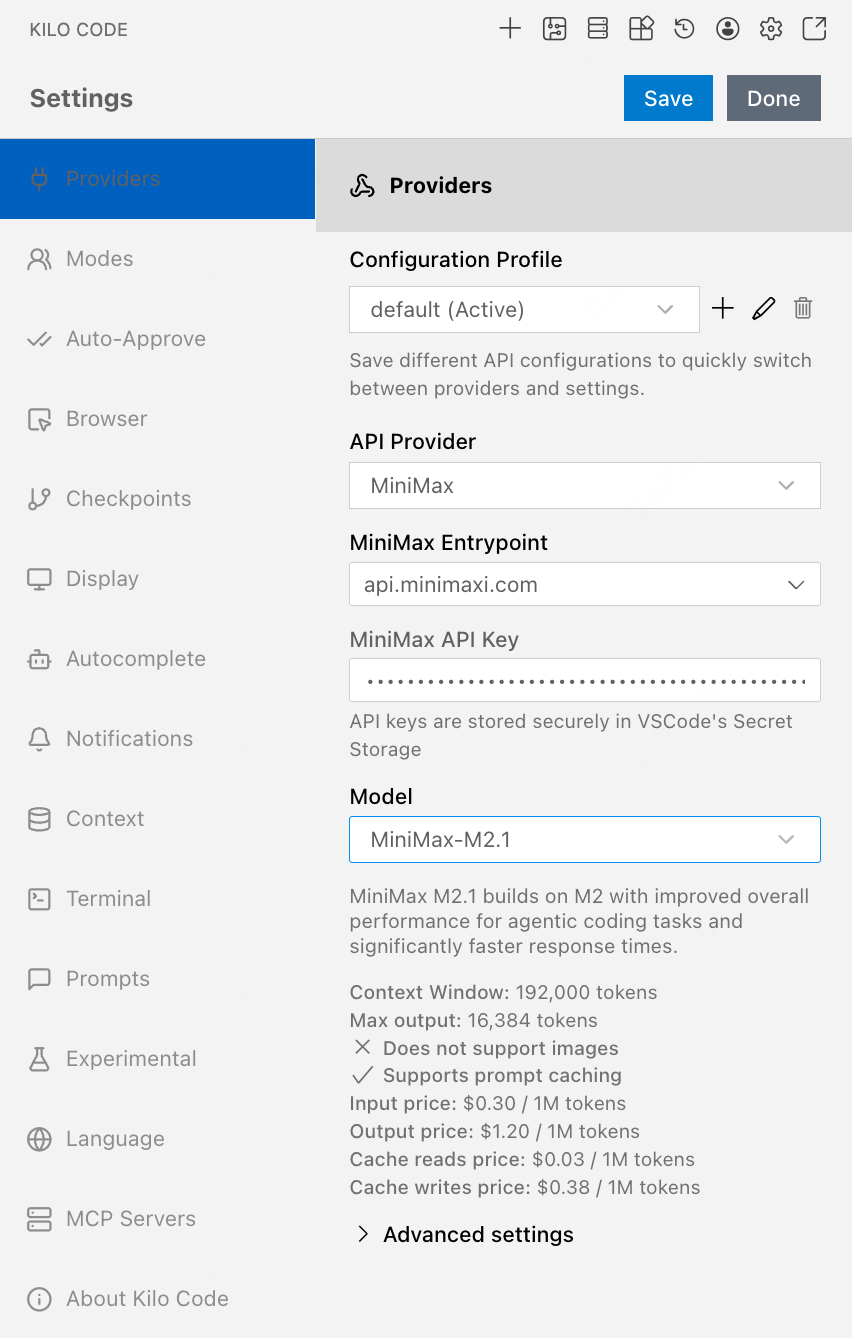

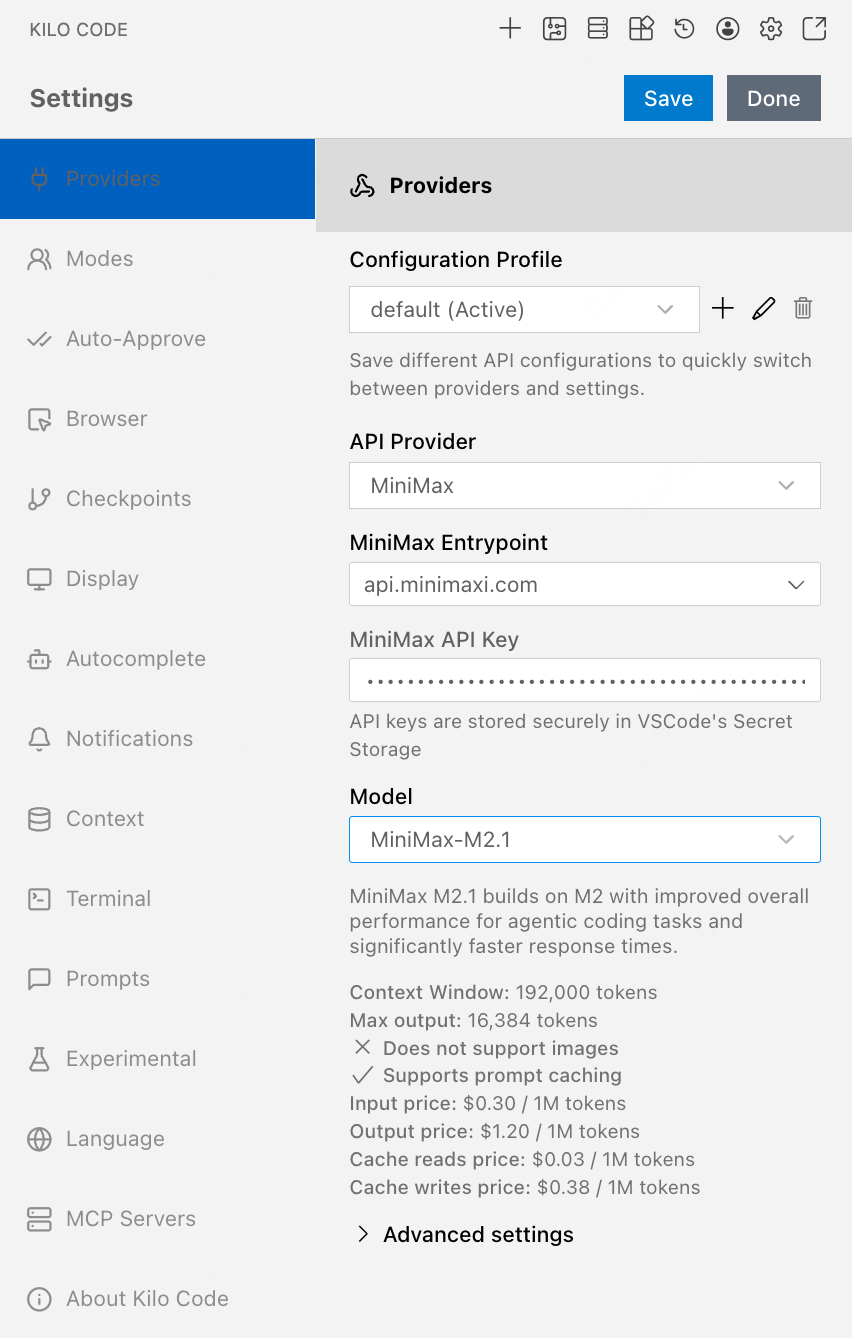

Kilo Code

Kilo Code is an open-source AI coding agent for VS Code, supporting multiple LLM providers and MCP servers.

First clear the ANTHROPIC_AUTH_TOKEN and ANTHROPIC_BASE_URL env vars — otherwise they will override the configuration.
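Several tools on this page carry a similar note about leftover environment variables. A quick preflight check can show which of them are still set in the current shell. This is a sketch: the variable lists are copied from the notes on this page, and `still_set` and `unset_commands` are illustrative helpers.

```python
import os

# Variables each tool's note on this page says to clear first.
VARS_TO_CLEAR = {
    "Kilo Code": ["ANTHROPIC_AUTH_TOKEN", "ANTHROPIC_BASE_URL"],
    "Codex CLI": ["OPENAI_API_KEY", "OPENAI_BASE_URL"],
    "Grok CLI": ["OPENAI_API_KEY", "OPENAI_BASE_URL"],
    "Droid": ["ANTHROPIC_AUTH_TOKEN"],
}


def still_set(tool, environ=None):
    """Return the variables for `tool` that are set to a non-empty value."""
    env = os.environ if environ is None else environ
    return [name for name in VARS_TO_CLEAR[tool] if env.get(name)]


def unset_commands(tool, environ=None):
    """Return the shell commands that would clear the leftover variables."""
    return [f"unset {name}" for name in still_set(tool, environ)]


# Prints nothing when the environment is already clean.
for command in unset_commands("Kilo Code"):
    print(command)
```

Run the printed commands in the same shell that launches the tool (or VS Code), since a child process cannot change its parent's environment.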

Install the extension

Search Kilo Code in the VS Code Extensions panel and install.

Configure MiniMax provider

Open Kilo Code → Settings:

- API Provider = MiniMax
- MiniMax Entrypoint = api.minimax.io
- MiniMax API Key = your Token Plan Key
- Model = MiniMax-M2.7

Click Save then Done.

Roo Code

Roo Code is an open-source VS Code extension with role-specialized AI coding agents (architect, coder, reviewer).

Install the extension

Search Roo Code in the VS Code Extensions panel and install.

Configure MiniMax provider

Open Roo Code → Settings and configure the same fields as for Kilo Code:

- API Provider → MiniMax
- MiniMax Entrypoint → api.minimax.io
- MiniMax API Key → your Token Plan API Key
- Model → MiniMax-M2.7

Click Save then Done.

Codex CLI

Codex CLI is OpenAI’s official terminal-based coding agent, written in Rust.

The latest Codex CLI has compatibility issues — version 0.57.0 is recommended.

First clear the OPENAI_API_KEY and OPENAI_BASE_URL env vars.

Edit config file

Edit ~/.codex/config.toml and add:

[model_providers.minimax]

name = "MiniMax Chat Completions API"

base_url = "https://api.minimax.io/v1"

env_key = "MINIMAX_API_KEY"

wire_api = "chat"

requires_openai_auth = false

[profiles.m27]

model = "codex-MiniMax-M2.7"

model_provider = "minimax"

Set env var and launch

export MINIMAX_API_KEY=sk-cp-... # from Token Plan

codex --profile m27

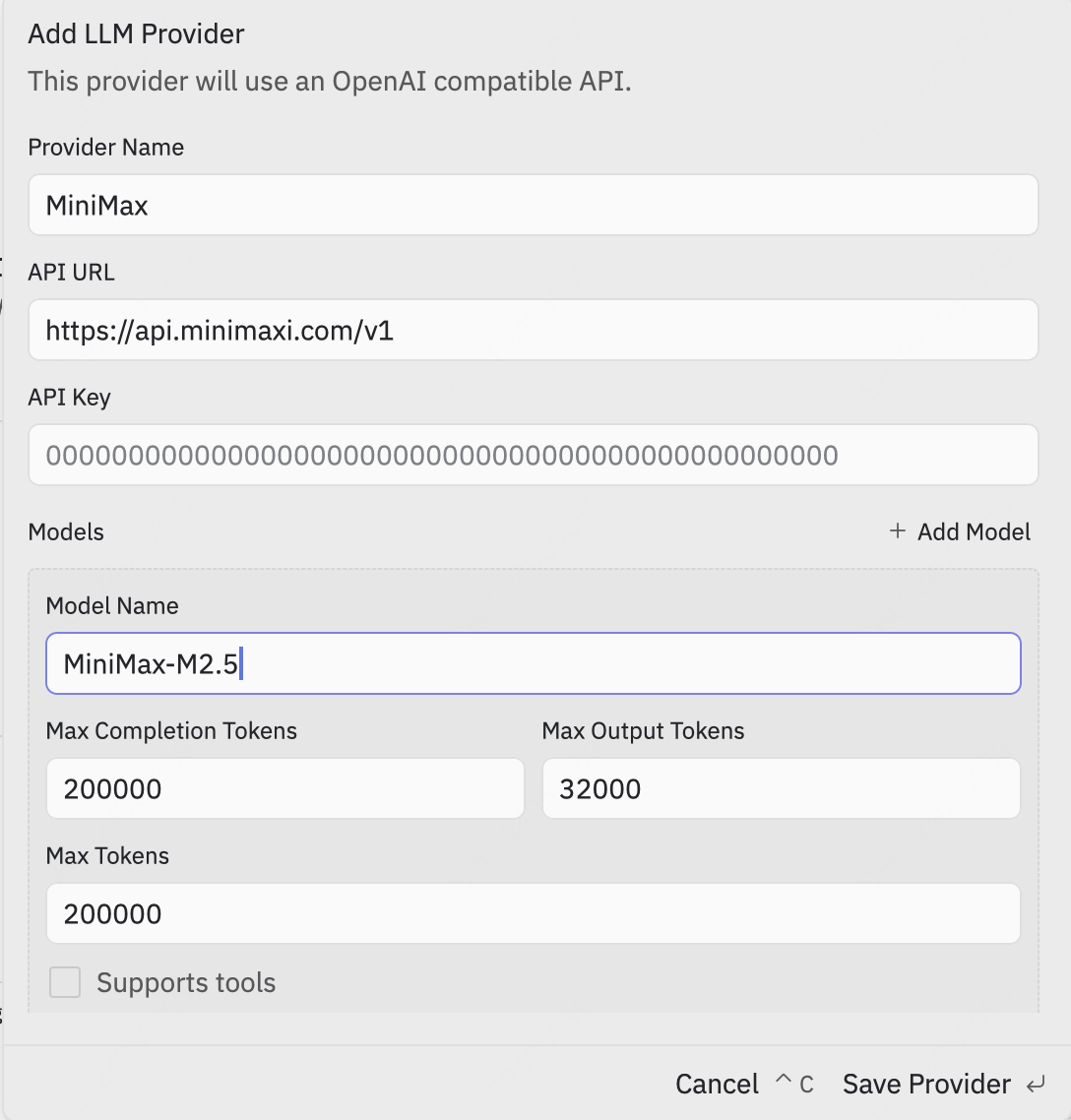

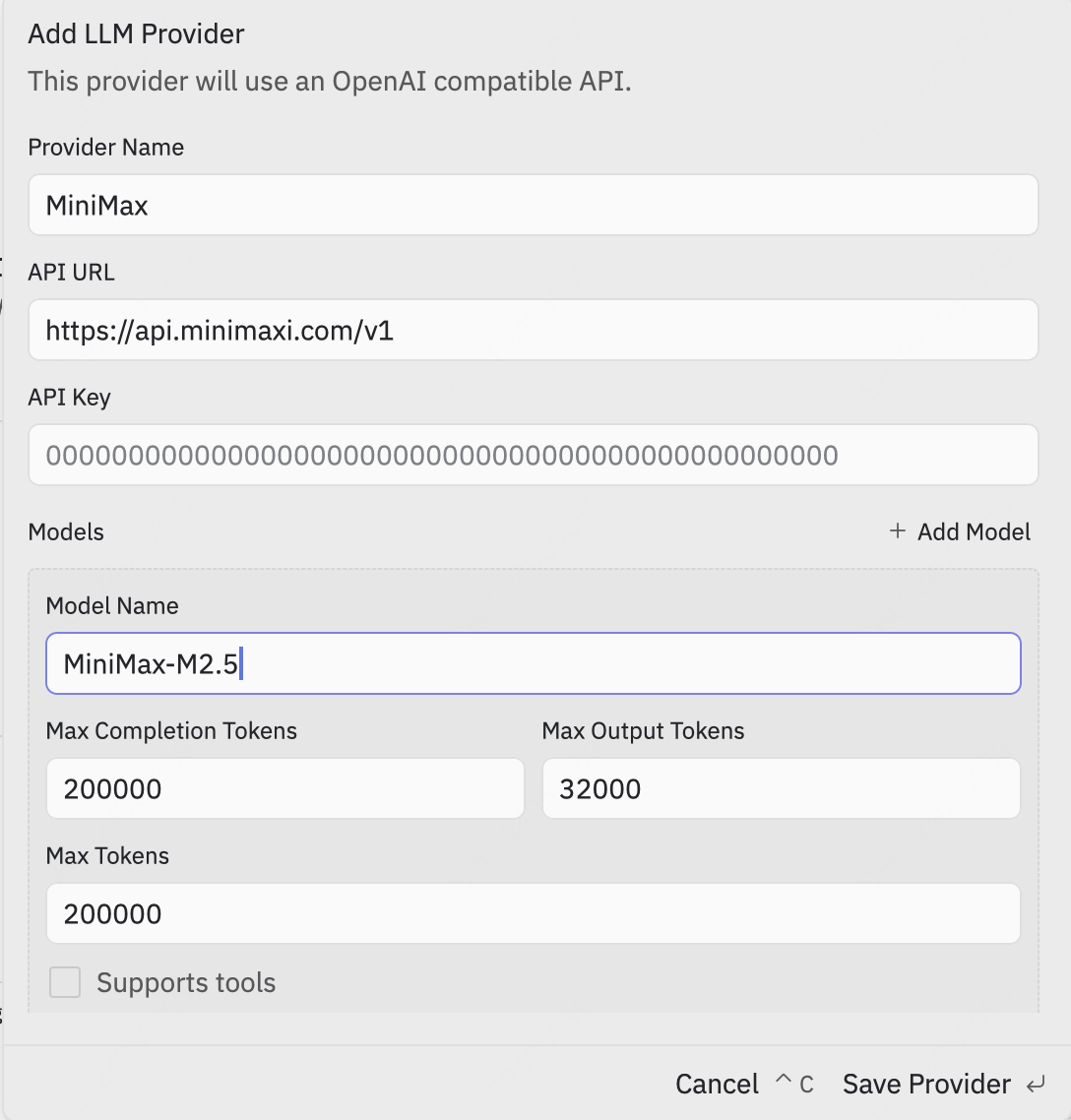

Zed

Zed is an open-source, high-performance multiplayer code editor written in Rust by the Atom creators.

Add LLM Provider

Settings → LLM Provider → +Add Provider → choose OpenAI, fill:

- API URL = https://api.minimax.io/v1
- API Key = from Token Plan
- Model Name = MiniMax-M2.7

Re-confirm API Key

Click Save Provider. Then back in the LLM Provider list, click the new MiniMax entry, re-enter the API Key and press Enter to confirm.

Pick the model

Return to the agent panel, click Select a Model at the bottom-right and pick MiniMax-M2.7.

OpenCode

OpenCode is an open-source terminal AI coding agent by SST, with multi-provider support and LSP integration.

OpenCode has built-in MiniMax-M2.7 support — no config file needed.

Install OpenCode

curl -fsSL https://opencode.ai/install | bash

# or: npm i -g opencode-ai

Authenticate

Run opencode auth login, then when prompted, search and pick MiniMax Token Plan (minimax.io), and enter your Token Plan API Key.

Launch

Run opencode to start.

Grok CLI

Grok CLI is an open-source terminal coding agent that connects to xAI’s Grok and OpenAI-compatible providers.

Not recommended for Agent workflows — use Claude Code or Cursor for best results.

First clear the OPENAI_API_KEY and OPENAI_BASE_URL env vars.

Install Grok CLI

npm install -g @vibe-kit/grok-cli

Set env vars and launch

export GROK_BASE_URL=https://api.minimax.io/v1

export GROK_API_KEY=sk-cp-... # from Token Plan

grok --model MiniMax-M2.7

Droid

Droid is Factory’s official terminal coding agent that integrates with IDEs and developer collaboration tools.

You must first clear the ANTHROPIC_AUTH_TOKEN env var (otherwise it overrides the API Key in config.json). Note the config file path is ~/.factory/config.json (not settings.json).

Install Droid

curl -fsSL https://app.factory.ai/cli | sh # macOS / Linux

# Windows: irm https://app.factory.ai/cli/windows | iex

Edit config file

Edit ~/.factory/config.json:

{

"custom_models": [{

"model_display_name": "MiniMax-M2.7",

"model": "MiniMax-M2.7",

"base_url": "https://api.minimax.io/anthropic",

"api_key": "<MINIMAX_API_KEY>",

"provider": "anthropic",

"max_tokens": 64000

}]

}
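If ~/.factory/config.json already holds other custom models, editing it by hand risks dropping them. One possible approach is to merge the entry programmatically. This is a sketch under the assumption that the file has the shape shown above, and `upsert_custom_model` and `update_file` are illustrative helpers rather than anything Droid provides.

```python
import json
from pathlib import Path

# The entry from the step above; the api_key placeholder is filled in later.
MINIMAX_ENTRY = {
    "model_display_name": "MiniMax-M2.7",
    "model": "MiniMax-M2.7",
    "base_url": "https://api.minimax.io/anthropic",
    "api_key": "<MINIMAX_API_KEY>",
    "provider": "anthropic",
    "max_tokens": 64000,
}


def upsert_custom_model(config, entry):
    """Return a copy of `config` with `entry` added, replacing a same-named entry."""
    kept = [
        model
        for model in config.get("custom_models", [])
        if model.get("model_display_name") != entry["model_display_name"]
    ]
    return {**config, "custom_models": kept + [entry]}


def update_file(path, api_key):
    """Merge the MiniMax entry into the JSON file at `path`, creating it if missing."""
    path = Path(path)
    config = json.loads(path.read_text()) if path.exists() else {}
    entry = {**MINIMAX_ENTRY, "api_key": api_key}
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(upsert_custom_model(config, entry), indent=2) + "\n")


# Offline demonstration on an in-memory config.
merged = upsert_custom_model({"custom_models": [{"model_display_name": "Other"}]}, MINIMAX_ENTRY)
print([model["model_display_name"] for model in merged["custom_models"]])
```

Calling `update_file(Path.home() / ".factory" / "config.json", "sk-cp-...")` with your real key applies the merge to the actual file.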

Launch and pick model

Launch droid, then /model and pick MiniMax-M2.7.

Qwen Code

Qwen Code is Alibaba’s open-source terminal coding agent optimized for the Qwen model family.

Install Qwen Code

Linux / macOS:

bash -c "$(curl -fsSL https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.sh)"

Windows:

powershell -Command "Invoke-WebRequest 'https://qwen-code-assets.oss-cn-hangzhou.aliyuncs.com/installation/install-qwen.bat' -OutFile (Join-Path $env:TEMP 'install-qwen.bat'); & (Join-Path $env:TEMP 'install-qwen.bat')"

Launch and pick provider

Run qwen in your terminal. Pick Third-party Providers → MiniMax API Key → region International.

Enter API Key

Enter your API Key from Token Plan (prefix sk-cp-…) and press Enter.

Confirm model IDs

The default model IDs already include MiniMax-M2.7 and MiniMax-M2.7-highspeed — press Enter to submit and start chatting.

Open WebUI

Open WebUI is a self-hosted, open-source AI chat platform with offline support, RAG, and multi-model runners.

Install Open WebUI

Install via Python pip (requires Python 3.11 to avoid compatibility issues):

pip install open-webui

open-webui serve

Add OpenAI Connection

Click the avatar (top-right) → Admin Panel → top Settings tab → left Connections. In the OpenAI row, click ➕ Add Connection, fill:

- URL: https://api.minimax.io/v1
- Auth (Bearer mode): your API Key from Token Plan (prefix sk-cp-…)

Pick the model

Click Save. Back in the chat view, pick MiniMax-M2.7 from the top model selector to start chatting.

nanobot

nanobot is an ultra-lightweight open-source personal AI agent CLI from HKUDS, with chat channels, memory, and MCP support.

Install nanobot

uv tool install nanobot-ai

# or: pipx install nanobot-ai

# or: pip install nanobot-ai # may need --user or --break-system-packages on macOS

Configure MiniMax in one shot

Replace sk-cp-... with your Token Plan API Key:

python3 -c '

import json, os, sys

p = os.path.expanduser("~/.nanobot/config.json")

c = json.load(open(p))

c["providers"]["minimax"]["apiKey"] = sys.argv[1]

c["providers"]["minimax"]["apiBase"] = "https://api.minimax.io/v1"

c["agents"]["defaults"]["provider"] = "minimax"

c["agents"]["defaults"]["model"] = "MiniMax-M2.7"

json.dump(c, open(p, "w"), indent=2)

' sk-cp-...
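The one-liner above assumes ~/.nanobot/config.json already exists and already contains the providers.minimax and agents.defaults sections. If that is not the case, it fails with a KeyError or FileNotFoundError. A more defensive variant of the same update might look like this. The key layout is taken from the snippet above, and `apply_minimax` is a hypothetical helper.

```python
import json


def apply_minimax(config, api_key):
    """Set the MiniMax provider and default model, creating missing sections."""
    provider = config.setdefault("providers", {}).setdefault("minimax", {})
    provider["apiKey"] = api_key
    provider["apiBase"] = "https://api.minimax.io/v1"
    defaults = config.setdefault("agents", {}).setdefault("defaults", {})
    defaults["provider"] = "minimax"
    defaults["model"] = "MiniMax-M2.7"
    return config


# Works on an empty config as well as on one with unrelated settings.
# Replace the placeholder with your Token Plan API Key.
print(json.dumps(apply_minimax({}, "sk-cp-..."), indent=2))
```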

OpenHands

OpenHands (formerly OpenDevin) is an open-source AI software-development agent by All-Hands-AI, with TUI, web GUI, and IDE integrations.

Configure MiniMax in one shot

Replace sk-cp-... with your Token Plan API Key:

pipx run --spec openhands python -c '

import sys

from openhands_cli.stores.agent_store import AgentStore

AgentStore().create_and_save_from_settings(

llm_api_key=sys.argv[1],

settings={"llm_model": "openai/MiniMax-M2.7",

"llm_base_url": "https://api.minimax.io/v1"},

)

' sk-cp-...

LangChain

LangChain is the open-source framework by LangChain Inc. for building LLM-powered applications, with model adapters, retrieval, agents, and tracing.

Install

pip install langchain-openai

Use MiniMax via the OpenAI-compatible adapter

Replace sk-cp-... with your Token Plan API Key:

from langchain_openai import ChatOpenAI

llm = ChatOpenAI(

model="MiniMax-M2.7",

api_key="sk-cp-...",

base_url="https://api.minimax.io/v1",

)

print(llm.invoke("Hello").content)
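To keep the key out of source files, the same setup can read it from the environment. The sketch below assumes the key is exported as MINIMAX_API_KEY, the variable name used for Pi and Codex CLI on this page. LangChain itself does not require that name, and `minimax_chat_kwargs` and `make_llm` are illustrative helpers.

```python
import os


def minimax_chat_kwargs(environ=None):
    """Build the ChatOpenAI keyword arguments from the environment."""
    env = os.environ if environ is None else environ
    api_key = env.get("MINIMAX_API_KEY")  # assumed variable name
    if not api_key:
        raise RuntimeError("MINIMAX_API_KEY is not set")
    return {
        "model": "MiniMax-M2.7",
        "api_key": api_key,
        "base_url": "https://api.minimax.io/v1",
    }


def make_llm():
    """Create the chat model; imports langchain-openai only when called."""
    from langchain_openai import ChatOpenAI

    return ChatOpenAI(**minimax_chat_kwargs())


# Offline check of the arguments, using a stand-in environment.
print(sorted(minimax_chat_kwargs({"MINIMAX_API_KEY": "sk-cp-..."})))
```

With the variable exported, `make_llm().invoke("Hello").content` behaves like the snippet above.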